Tesla Model 3: Autopilot Is Awesome and Fraught

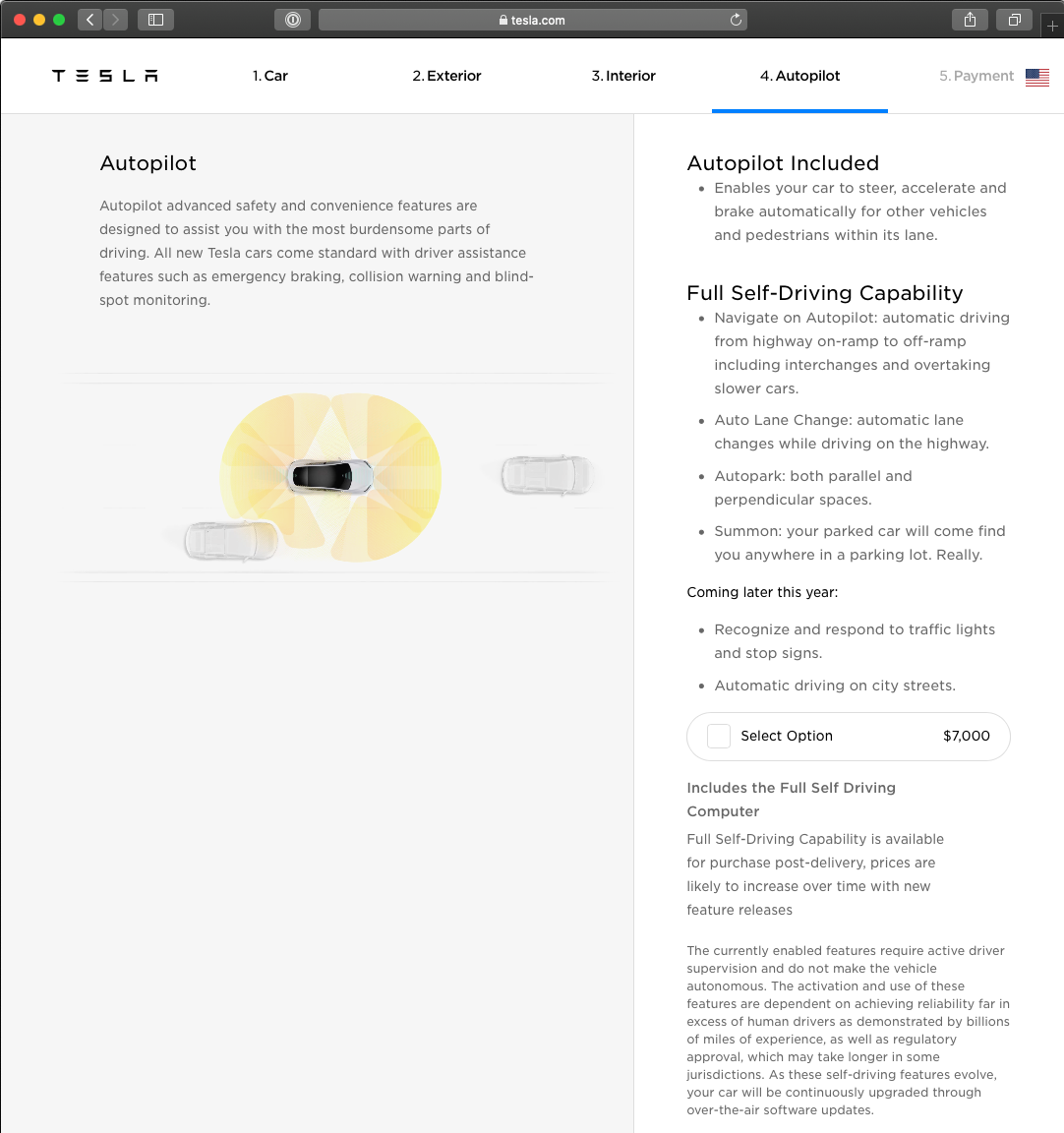

In previous posts (here and here), I’ve described my experiences with my Model 3. After driving it over 9,000 miles, I still love the car. In this post, I focus on what Tesla brands as both Autopilot and Full Self-Driving Capability. All Model 3s include some features, but you must buy the $7,000 “Full Self-Driving Capability” option to get the most interesting features.

The Sales Pitch

Tesla’s online ordering system gives this concise description:

The Big Questions

I’m going to examine the features one at a time, trying to answer three big questions for each feature:

Does it work?

Is it useful in real life?

Is it safe?

I can only give you my impressions based on my particular experiences. My answers are weakest when it comes to safety because only data drawn from hundreds of millions of miles driven by many drivers in many cars on many roads can give a complete picture. But, I will explain situations where I’ve felt threatened by some action the car took and other situations where the car might very well have prevented an accident.

Survey Data

In November 2019, Bloomberg News published the results of a survey of 5,000 Model 3 owners about Autopilot safety. It is worth reading because it gives a much broader range of opinion than I can. Over 90% of survey respondents feel that Autopilot makes them safer. But this survey, too, is just anecdote and opinion, not conclusive data.

Accident Data

Tesla releases quarterly high-level accident data aggregated across all of its cars on the road, not just Model 3. The 4Q19 data showed one accident per 3.07 million miles driven while Autopilot was engaged compared to one accident per 2.10 million miles driven while Autopilot was not engaged. Even without Autopilot, late-model Tesla cars have active safety features that are still relatively rare among cars on the road. Tesla reports that National Highway Traffic Safety Administration (NHTSA) data shows one accident per 479,000 miles driven.

There are two implications Tesla probably hopes we draw from this data:

Active safety features included in all late-model Tesla cars reduce accidents.

Driving a Tesla with Autopilot engaged is even safer.

I find both plausible, but Tesla hasn’t presented detailed enough data to be certain.
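To make the comparison concrete, here is a minimal Python sketch that converts the three figures quoted above into accidents per million miles and into ratios. The function names and dictionary keys are my own labels, not anything Tesla publishes. The arithmetic only restates the quoted numbers; it cannot tell us whether the groups being compared drive on similar roads or in similar conditions.

```python
# Miles driven per reported accident, as quoted above from Tesla's 4Q19 report.
# The dictionary keys are illustrative labels, not official category names.
MILES_PER_ACCIDENT = {
    "autopilot_engaged": 3_070_000,
    "autopilot_not_engaged": 2_100_000,
    "nhtsa_baseline": 479_000,
}


def accidents_per_million_miles(miles_per_accident: float) -> float:
    """Convert 'one accident per N miles' into accidents per million miles."""
    return 1_000_000 / miles_per_accident


def miles_ratio(a: str, b: str) -> float:
    """How many times farther category a travels per accident than category b."""
    return MILES_PER_ACCIDENT[a] / MILES_PER_ACCIDENT[b]


if __name__ == "__main__":
    for name, miles in MILES_PER_ACCIDENT.items():
        rate = accidents_per_million_miles(miles)
        print(f"{name}: {rate:.2f} accidents per million miles")
    print(f"Autopilot vs. no Autopilot: {miles_ratio('autopilot_engaged', 'autopilot_not_engaged'):.2f}x")
    print(f"No Autopilot vs. NHTSA figure: {miles_ratio('autopilot_not_engaged', 'nhtsa_baseline'):.2f}x")
```

By these figures, a Tesla without Autopilot engaged goes roughly 4.4 times as far between accidents as the NHTSA figure, and engaging Autopilot adds a further factor of about 1.5. Those ratios are what the two implications rest on, which is why the underlying detail matters.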

As we go through various self-driving capabilities, I’ll give examples when my Tesla made me less safe and examples when it made me safer. The balance matters and there’s not enough data to understand that balance.

Traffic-Aware Cruise Control

What is It?

The idea is simple: You tell the car how fast you want to go and it does it, slowing down or stopping if there’s a slower-moving or stopped car, bike, or person in your lane.

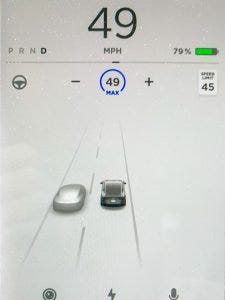

To simplify the process, the car knows the speed limit in most areas, as illustrated in the screen capture. (It is usually correct, but it is clearly getting the speed limit from the nav system, not by reading signs.) You can set an offset to the speed limit, and whenever you turn on cruise control, it will set the speed to the higher of your current speed or the speed limit plus the offset. For example, I’ve set my offset to 4, so in a 45 mph zone, cruise control will keep the car at 49 mph, unless, of course, a vehicle in front of me is going more slowly.
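The rule for picking the set speed can be sketched in a few lines of Python. This is a simplified model of the behavior I observe, not Tesla's actual control logic. The function names are hypothetical, and the real system also manages following distance and smooth braking, which this ignores.

```python
from typing import Optional


def initial_set_speed(current_mph: float, limit_mph: float, offset_mph: float) -> float:
    """Set speed chosen when cruise control is switched on:
    the higher of the current speed or the speed limit plus the offset."""
    return max(current_mph, limit_mph + offset_mph)


def target_speed(set_mph: float, lead_vehicle_mph: Optional[float] = None) -> float:
    """Speed the car aims for: the set speed, capped by a slower vehicle ahead.
    A lead vehicle speed of 0 means the car in front has stopped."""
    if lead_vehicle_mph is None:
        return set_mph
    return min(set_mph, lead_vehicle_mph)


if __name__ == "__main__":
    # My settings: offset of 4 in a 45 mph zone, engaged while doing 40 mph.
    set_speed = initial_set_speed(current_mph=40, limit_mph=45, offset_mph=4)
    print(set_speed)                    # 49
    print(target_speed(set_speed, 30))  # 30, following a slower car
    print(target_speed(set_speed, 0))   # 0, the car in front has stopped
```

Note that if you engage cruise control while already going faster than the limit plus offset, the current speed wins.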

If the vehicle in front stops, the Tesla stops. When the vehicle in front resumes, the Tesla resumes, generally smoothly and appropriately.

Does it Work? Is it Useful?

Absolutely. I use the cruise control much of the time I’m driving. Stop-and-go traffic is not so bad when the cruise control is doing the stopping and going. Similarly, it is great to prevent speeding past bottom-of-the-hill, hidden-around-the-bend radar traps.

More importantly, cruise control saves you from panic stops (or worse) when your attention wanders, and it reacts more quickly than you can to sudden stops in front of you. Several times, I’ve started to stomp on the brake, only to realize that cruise control has already started braking.

Is it Safe?

As I explained earlier, only extensive data can answer this question conclusively. But, I believe I am safer driving with cruise control than without it because it reacts more quickly than I can and its attention never wanders.

Unexpected Braking

That said, it has one flaw that could be a safety hazard and is certainly disconcerting: Occasionally, the car will brake hard in situations in which no human driver would do so, setting up the possibility of a tailgater ramming into the Tesla’s rear end. I’ve seen this behavior in three situations:

Another car crosses in front of me sufficiently far away that a human driver would not brake for it. It seems that the cruise control does not “understand” that the car that appears to be an obstacle will be long gone before I get there.

A car in front of me slows and moves into a right-hand-turn lane. Often the cruise control will brake for this car even though I know that it will be in the turn lane before I get there.

Traveling at speed on an interstate highway, the car will occasionally brake hard for no apparent reason. This is disconcerting and possibly dangerous. It seems to happen when cresting a hill and there’s one of those big, green, interstate highway signs across the road at the top of the hill. A human knows that the sign is way above the roadway, but I can understand that it doesn’t look that way from just below the top of the hill.

When the car brakes for no good reason like this, it does respond to the accelerator so it is easy to correct, hopefully before it causes a problem.

Remember To Brake for Lights and Stop Signs

One very real danger is that you must remember to brake for red lights and stop signs. The car doesn’t do it on its own. Of course, if there’s a car in front of you, your car will stop when it stops. After driving for a while in stop-and-go traffic with cruise control, it is easy to become complacent about lights because most of the time there will be a car in front.

Interestingly, Tesla is working on that. A software update about two months ago added “visualization” of traffic lights and their current color. Although Tesla didn't say so, this is clearly a step toward delivering the first item on their “delivering later this year” list in the online ordering page shown above.

Autosteer

What is It?

Autosteer is true to its name: it steers the car, staying in your lane. Combined with cruise control, the car handles some of the most mundane aspects of driving.

Tesla warns that you are responsible for driving and that you must be attentive when using Autosteer. If the car detects that you are inattentive (it expects you to make small movements of the steering wheel), it first displays a gentle reminder to make small motions of the steering wheel, then flashes part of the screen blue, and eventually makes a piercing sound that will wake you from your slumber. If it has to do this often enough, it prohibits you from using Autosteer for a while.

Does it Work? Is it Useful?

It works well. My experience even on roads with faded lane markings has been good. The one exception is during heavy rain, when it sometimes warns of poor weather and shuts off. It occasionally warns that a particular camera is blocked, which seems to happen either from rain or when it is blinded by the sun.

Autosteer works, but is unhelpful on local roads.

It is more useful for highway driving. In particular, it reduces tedium on long drives helping me to drive longer without fatigue.

If you also purchase full self-driving capabilities, Autosteer becomes much more useful on the highway. I’ll talk about that below.

Is it Safe?

I haven’t had any really bad experiences with Autosteer. At first, it seemed to drive too far right in the lane. Then I discovered that it drives centered in the lane and I always drive left of center, something I never realized about my own driving.

Of course, if Autosteer does something you don’t like, you can take control just by starting to steer. You’ll feel a little resistance at first, but then there’s a bell sound and Autosteer shuts off and you’re steering as usual.

In one particular situation, Autosteer sometimes does something disconcerting, if not unsafe: When traveling in the right lane of a highway just past an entrance ramp coming in from the right, the car will sometimes pull to the right, toward the curved lane marking from the entrance ramp, as indicated by the green arrow. I’ve never felt in danger from this, but I have several times overridden Autosteer in this situation. Of course, I don’t know what would have happened had I not taken control.

Autopark

What is It?

Purchasing the “full self-driving capability” adds automated parking: Pull up to a parallel or a perpendicular (not angled) parking spot, the car indicates the space on the screen, you touch it, put the car in reverse, and it does the rest.

Does it Work? Is it Useful?

Autopark works reasonably well. Sometimes it is finicky about when it detects a parking spot, requiring that you drive slowly enough and at the right distance from a potential parking place.

If I tell it to park, usually it can do it. A few times, I felt like it was going to hit either a curb or an adjacent car and I stopped it by pressing the brake. I don’t know whether it would have stopped, adjusted, and succeeded, or whether it would have hit something. I wasn’t willing to take the risk!

People, including me, are impressed when they see the car autopark. But it isn’t particularly useful because (manual) parking is quite easy given the excellent back camera with lines showing where the car is headed. I rarely use autopark because I can park faster myself with little effort and no risk.

Is it Safe?

While autopark is underway you are sitting in the driver’s seat presumably paying attention. You can stop it instantly by stepping on the brake. As I said, there have been a few times when I stopped autopark. Even if I had let it continue, it is a very low speed maneuver and only property would have been damaged.

Summon

What is It?

Purchasing the “full self-driving capability” also adds two kinds of summoning, in which you use your phone to direct the car to move on its own out of a parking place.

The simplest capability is plain old “summon.” As the accompanying screen from the Tesla mobile app indicates, you can direct the car to move in the forward or reverse direction. The car will pull straight forward or back straight back, until you release the button or it detects an obstacle. This is useful when trying to get in or out of a very tight parking spot, one of those situations where you'd ordinarily have to contort your body to get in or out of the car.

The more advanced “smart summon” can navigate parking lots to come get you. This is ideal, for example, when it is raining and you realize that your umbrella is in the car. Simply press “smart summon” and the car drives to wherever you’re waiting.

Does it Work? Is it Useful?

Summon works and is useful in limited circumstances. Other than demonstrating the capability to friends, I’ve used it once in the six months I’ve owned the car, but if you park frequently in lots with narrow spaces you may find it more useful.

Smart summon works sometimes. The safety requirement to keep the car in sight at all times makes it unusable in multi-story parking garages where you’d really like to avoid the elevator or climbing multiple flights of stairs. In other situations, it seems to work well in some parking lots and not in others. Some Tesla owners have discovered that smart summon works best if the lot is mapped in OpenStreetMap. Indeed, some owners are adding or improving maps for parking lots they use to improve smart summon.

Smart summon is currently a parlor trick. I’ve tried it a few times when it would have been useful, but it didn’t work well enough or the lot was too crowded for me to feel safe using it. Being somewhat cynical, I’m guessing that Tesla released smart summon in its present state primarily to recognize revenue for all the sales they made of “full self-driving capability”. Otherwise, I can’t understand why they wouldn’t call smart summon a beta capability.

Is it Safe?

Simple summon seems very low risk: You’re standing near the car and if you release the directional button on your phone the car stops essentially instantly. So, if it hits something, you’re not paying attention.

Smart summon is different. The car could be as much as 200 feet away from you in a parking lot busy with other cars and pedestrians. You’re supposed to have the car within sight at all times. There is a mode that requires you to keep a button pressed on your phone for the car to keep moving; I set that mode.

So, an attentive user should be able to stop the car in time to avoid an accident, but I worry that another (human) driver might round a corner too quickly for either car to be able to stop, causing an accident. More importantly, I worry that an absentminded pedestrian could walk in the way of the moving car. Of course, it should stop, but I have no experience to build confidence in the safety.

Auto Lane Change

What is It?

When Autosteer is active, Auto Lane Change will move your car into an adjacent lane. You use the turn signal to request the lane change. The car decides when it is safe to change lanes and executes the move, including appropriate speed change to smoothly merge into the lane, all automatically.

Does it Work? Is it Useful?

It works well and is incredibly useful, especially on the highway. When I first got the car, the lane changes were unnaturally sudden with uncomfortable acceleration. But a software update a few months after purchase fixed all of that.

I have used auto lane change extensively during several long trips, including high-traffic stretches of multi-lane interstate highway. It works so well that I feel it lowers my stress level and reduces fatigue.

Is it Safe?

Again, I can’t give you statistics, but my experience has been very good. I’ve deliberately requested unsafe lane changes and the car waited until there was a suitable opening before moving.

Several times, during a lane change, the car moved suddenly back into the original lane. In each case, another fast-moving car weaving in and out of lanes was moving toward my destination lane, and the car corrected even before I realized what was happening. In fact, the danger is that I, not yet realizing what was happening, would take back control of the car, thus defeating the car’s defensive move.

Complacency is another potential danger. Auto lane change works so well that it would be easy to fall out of the habit of checking the blind spot, etc.

Navigate on Autopilot

What is It?

Navigate on Autopilot, which Tesla labels a beta feature, is about as close as it gets to automated driving. It is aimed at long-distance driving: You tell the nav system your destination, then once you’ve reached a highway on-ramp, it will drive to the appropriate highway off-ramp, based on your destination, along the way suggesting (or doing) lane changes based on both traffic flow and navigation.

Does it Work? Is it Useful?

I’ll answer the second question first: It is useful. I use it for most of my highway driving. Parts of it work well, but not all of it works, at least not reliably.

Lane Changes for Traffic Flow

The part that works well is the combination of autosteer, traffic-aware cruise control, and auto lane change, together with suggested lane changes based on traffic flow. Basically, this means that while you’re traveling on the highway, the car is driving itself, passing other cars when necessary (you can specify what that means), and moving out of the passing lane when other cars are approaching from behind at speed. You can choose whether to allow the car to make lane changes completely on its own, or whether it suggests a lane change and you confirm using the turn signal. I do the latter.

Often, the car will suggest a lane change to pass before I even recognize that I’m overtaking the car in front of me. This is nice because it avoids driving up to another car’s rear and then passing.

Lane Changes for Following the Route

The car also suggests (or makes, depending on setting) lane changes necessary to stay on the route. For example, this happens when an interstate splits with one branch continuing on the same highway and another branch merging onto a second highway. Sometimes this works fine; other times it suggests unnecessary or even incorrect lane changes; other times it fails to suggest necessary lane changes. And the system seems oblivious to lanes closed due to construction or accident.

Based on your destination, the car will also automatically take the appropriate highway exit ramp. In my experience, this is hit and miss. Sometimes it does it and sometimes not, which makes this feature pretty useless because I don’t know of any way to predetermine which it will be in each particular situation.

Is it Safe?

Navigate on Autopilot is really an integration of other capabilities I’ve already discussed with the nav system. To the extent that those other capabilities are safe, so is Navigate on Autopilot.

The new danger is that it all works well enough that one has to fight complacency. It is easy to let one’s attention wander and then to be surprised by a situation that the car can’t necessarily handle, like a blocked lane. As I’ve described above, the Tesla does give a series of escalating warnings if it doesn’t detect you applying a small amount of torque on the steering wheel.

Summary

It’s Awesome

Autopilot and “full self-driving capability” are awesome new capabilities in a car. But they’re not game-changers — yet. They make it easier to take long trips and I’m willing to believe that I’m overall safer when using them than I would be driving myself. Tesla should supply data for an independent, expert analysis of the safety tradeoffs.

As a techie, it is also tremendous fun to try these new capabilities and think about how hard it is to automate driving in real life. And to see the step-by-step progress that Tesla is making through frequent software upgrades.

It’s Fraught

Still, it is early days for a lot of this capability. I paid $6,000 extra for many features that either don’t work well or aren’t very useful in practice. But I knew this going into that purchase: I chose to buy into the future of the car as the software evolves. I won’t know for several years whether or not that was a good decision. But I’m encouraged by the pace of improvement coming with software updates. What I can’t tell is whether the fundamental system design is in place to let Tesla eventually turn my car into a really self-driving car.

Trust is the Key Issue

You’re driving at 60 mph well behind the car in front of you and you see red brake lights go on. Yet, the Tesla isn’t slowing down. What do you do? You could apply the brakes and begin to slow, or you could trust that traffic-aware cruise control will brake when it is really necessary.

You're traveling at speed in the right lane of a highway and you see a big truck barreling down the entrance ramp. Do you trust that either the truck will yield appropriately, or that the car will slow or increase speed appropriately, or change lanes to avoid a potential problem? (I’ve never seen the car change lanes anticipating traffic merging in from an on-ramp.)

There are tons of examples like this. Over time, one learns what one feels comfortable trusting and what one wants to control completely manually. So, I’ve learned to trust that traffic-aware cruise control is going to brake whenever necessary; unfortunately, it also occasionally brakes unnecessarily, sometimes requiring me to override it. I’ve learned to trust that auto lane change will safely change lanes. But I set the car so that I must confirm a lane change proposed by navigate on autopilot because it makes too many mistakes (not dangerous ones, but inconvenient ones).

Yes, Tesla repeatedly states that human drivers are responsible for the safe operation of the car. But in real life, all of the autopilot and self-driving features are useless if one doesn’t trust them, to some degree, to work.

Software Updates

Software updates complicate the trust equation. I’ve been impressed with the new features and the fixes that have been delivered by software updates. But, as we all know from the software world in which we live, updates can also break things, sometimes things that have nothing whatsoever to do with the supposed new feature or fix. It’s no big deal if it is something in the entertainment system, but it is a very big deal if, after an update, traffic-aware cruise control no longer stops in time or auto lane change now will change lanes when there’s a car in the way. So, whenever there’s an update, I drive especially attentively until I rebuild my confidence.

Complacency is also a Problem

As airplanes became increasingly automated in the 1980s, pilot complacency was recognized as a real problem. We are going through that again with automation of driving: Tesla’s automation is good enough that it takes real effort for a driver to stay focused and be ready to intervene effectively if need be. I’ve certainly experienced this myself.

Until automation can fully automate driving, careful attention must be paid to the human factors issues of a combined man-machine driving experience. Tesla has paid attention to it and I hope that they continue to do so, even as that might dilute their automation marketing message.